OpenClaw PR Review Automation: Push Merge Verdicts to Telegram

This OpenClaw use case shows how to automatically review pull request diffs, flag blocking issues, and send a clean merge verdict to Telegram so teams can move faster without losing review discipline.

0) TL;DR (3-minute launch)

- Problem: for many teams the bottleneck is not writing code but waiting on slow pull request feedback.

- Workflow in short: PR diff → OpenClaw first-pass review → Telegram message with a verdict of APPROVE, REQUEST_CHANGES, or BLOCK.

- Guardrail: Never treat AI review as final approval. Keep human ownership for merge decisions.

- Review results weekly and refine prompts, thresholds, or routing rules.

1) What problem this solves

For many teams the bottleneck is not writing code but waiting on slow pull request feedback. OpenClaw can automate the first-pass PR review and send a concise verdict to Telegram. This cuts waiting time, keeps reviewers focused on high-impact decisions, and gives every PR the same review format.

2) Who this is for (and not for)

Good fit:

- Fast-moving product teams shipping many PRs per week

- Remote teams that rely on Telegram for async coordination

- Founders and indie devs who want review consistency without heavy process

Not a fit:

- Teams expecting auto-merge without human accountability

- Strict compliance environments where AI output cannot be used

- Repos without baseline test discipline or review checklists

3) Workflow map

4) MVP setup

5) Prompt template

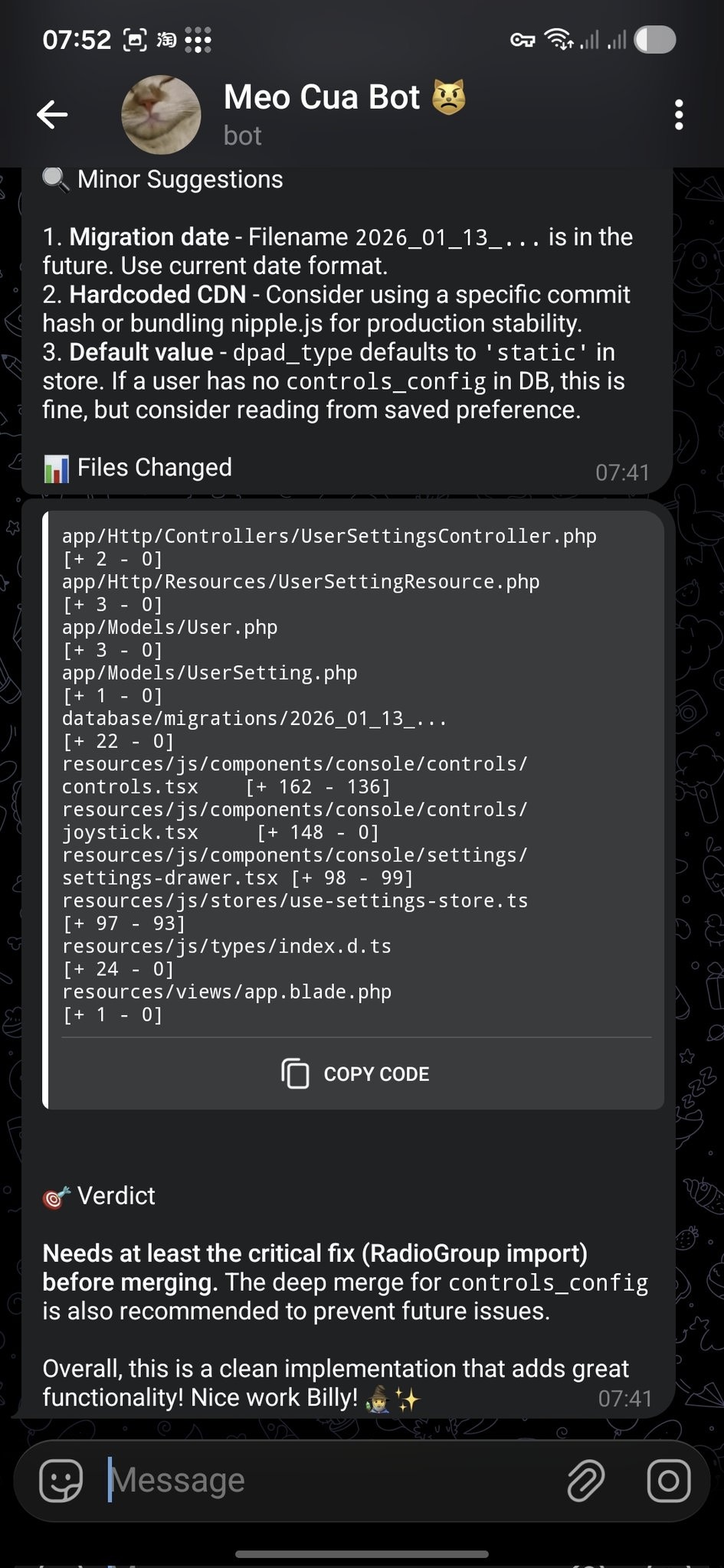

    You are a senior code reviewer. Review the PR diff and output concise, actionable feedback.

    Goals:
    1) Correctness: logic errors, edge cases, regressions
    2) Security: auth, input validation, secret leakage
    3) Maintainability: complexity, naming, duplication
    4) Testability: missing tests, fragile behavior

    Output format:
    - Verdict: [APPROVE | REQUEST_CHANGES | BLOCK]
    - Blocking Issues (max 3):
      - [file/location] issue + risk + fix
    - Suggestions (max 5):
      - practical improvements
    - Quick Patch Plan (3 steps):
      - exact next actions for PR author

    Constraints:
    - no generic advice
    - each point must be directly actionable
    - when context is missing, state what is needed
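Before a verdict can be routed anywhere, the model's reply has to be turned into structured data. The following is a minimal Python sketch of one way to do that, assuming the reply follows the output format requested in the template with one field or bullet per line. The `Review` class, `parse_review` function, and `SECTIONS` mapping are hypothetical names chosen for illustration and are not part of OpenClaw.

```python
import re
from dataclasses import dataclass, field

@dataclass
class Review:
    verdict: str
    blocking: list = field(default_factory=list)
    suggestions: list = field(default_factory=list)
    patch_plan: list = field(default_factory=list)

# Hypothetical mapping from section headings in the reply to Review fields.
SECTIONS = {
    "blocking issues": "blocking",
    "suggestions": "suggestions",
    "quick patch plan": "patch_plan",
}

def parse_review(text: str) -> Review:
    """Parse a reply that follows the template's output format."""
    verdict = None
    current = None
    items = {name: [] for name in SECTIONS.values()}
    for raw in text.splitlines():
        # Drop leading bullet markers and surrounding whitespace.
        line = raw.strip().lstrip("-*").strip()
        if not line:
            continue
        lowered = line.lower()
        if lowered.startswith("verdict"):
            match = re.search(r"\b(APPROVE|REQUEST_CHANGES|BLOCK)\b", line)
            if match:
                verdict = match.group(1)
            current = None
            continue
        section = next(
            (name for heading, name in SECTIONS.items() if lowered.startswith(heading)),
            None,
        )
        if section:
            current = section
            continue
        if current:
            items[current].append(line)
    if verdict is None:
        # Refuse to guess: a reply without a verdict should go to a human.
        raise ValueError("no recognizable verdict in model output")
    # Enforce the caps from the template (max 3 / max 5 / 3 steps).
    return Review(
        verdict,
        items["blocking"][:3],
        items["suggestions"][:5],
        items["patch_plan"][:3],
    )
```

Raising on a missing verdict, rather than defaulting to APPROVE, keeps a malformed reply from being read as a green light.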

6) Cost vs. payoff

Setup cost

~1-2 hours for first rollout (integration, prompt tuning, notification format).

Operating cost

Model usage scales with PR volume. Keep outputs short to control spend.
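To see how spend scales with PR volume and output length, a back-of-the-envelope estimate can help. The sketch below is an assumption-laden illustration: the function name, the token counts, and the per-1k-token prices are all placeholders, not real pricing for any model. Substitute your provider's actual rates.

```python
def monthly_review_cost(
    prs_per_month: int,
    avg_diff_tokens: int,
    avg_output_tokens: int,
    usd_per_1k_input: float,
    usd_per_1k_output: float,
    prompt_tokens: int = 250,  # assumed size of the fixed review prompt
) -> float:
    """Estimate monthly model spend in USD for first-pass PR reviews."""
    input_cost = (prompt_tokens + avg_diff_tokens) / 1000 * usd_per_1k_input
    output_cost = avg_output_tokens / 1000 * usd_per_1k_output
    return round(prs_per_month * (input_cost + output_cost), 2)

# Placeholder numbers only: 200 PRs, 3750-token diffs, made-up prices.
long_outputs = monthly_review_cost(200, 3750, 500, 0.01, 0.03)
short_outputs = monthly_review_cost(200, 3750, 250, 0.01, 0.03)
print(long_outputs, short_outputs)  # 11.0 9.5
```

With these made-up inputs, halving the output length trims the estimate from 11.0 to 9.5. The real ratio depends on how your provider prices input and output tokens.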

Payoff

Faster review cycles, less context switching, and cleaner reviewer handoff.

7) Risk boundaries

- Never treat AI review as final approval. Keep human ownership for merge decisions.

- Use least-privilege access tokens. Scope by repo and operation.

- Protect private code. Send summary-level findings to Telegram, not full sensitive diffs.
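The last two guardrails can be sketched in Python as follows. This is an illustration, not OpenClaw's own integration: `summarize_for_telegram` and `send_to_telegram` are hypothetical helpers, and the environment variable names are assumptions. The only external API used is the Telegram Bot API `sendMessage` method, which caps message text at 4096 characters.

```python
import json
import os
import urllib.request

TELEGRAM_LIMIT = 4096  # Bot API cap on sendMessage text length

def summarize_for_telegram(pr_ref, verdict, blocking, max_items=3, max_len=200):
    """Build a summary-level message; never include diff or code bodies."""
    lines = [f"PR {pr_ref}: {verdict}"]
    for issue in blocking[:max_items]:
        stripped = issue.strip()
        if not stripped:
            continue
        # Keep only the first line of each finding, so any pasted code
        # or diff hunk that follows it is dropped.
        first_line = stripped.splitlines()[0].strip()
        if len(first_line) > max_len:
            first_line = first_line[: max_len - 3] + "..."
        lines.append(f"- {first_line}")
    return "\n".join(lines)[:TELEGRAM_LIMIT]

def send_to_telegram(text):
    """Post the summary via the Bot API; returns the HTTP status code."""
    # Assumed variable names; keep the token out of source control.
    token = os.environ["TELEGRAM_BOT_TOKEN"]
    chat_id = os.environ["TELEGRAM_CHAT_ID"]
    payload = json.dumps({"chat_id": chat_id, "text": text}).encode()
    request = urllib.request.Request(
        f"https://api.telegram.org/bot{token}/sendMessage",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Separating message construction from sending makes the redaction step easy to test without touching the network.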

8) From MVP to production

- Use repository-specific review profiles (frontend/backend/infra)

- Add severity routing: block-level alerts ping owners immediately

- Track suggestion acceptance rates to calibrate your prompt over time

- Combine the verdict with CI status so that failing tests raise the risk level of a PR
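Three of the production steps above (severity routing, acceptance tracking, and CI-aware verdicts) can be sketched in a few lines of Python. The escalation policy here is an assumption made for illustration: a failing CI run bumps the verdict up one level and nothing ever lowers it. All function names are hypothetical.

```python
# Verdicts ordered from least to most severe.
ORDER = ["APPROVE", "REQUEST_CHANGES", "BLOCK"]

def effective_verdict(ai_verdict: str, ci_passed: bool) -> str:
    """Escalate the AI verdict by one level when CI is failing (assumed policy)."""
    level = ORDER.index(ai_verdict)
    if not ci_passed:
        level = min(level + 1, len(ORDER) - 1)
    return ORDER[level]

def route(verdict: str, owners: list) -> dict:
    """BLOCK pings owners immediately; other verdicts post without mentions."""
    is_block = verdict == "BLOCK"
    mentions = " ".join(f"@{owner}" for owner in owners) if is_block else ""
    return {"notify_now": is_block, "mentions": mentions}

def acceptance_rate(accepted: int, total: int) -> float:
    """Share of AI suggestions that reviewers or authors actually applied."""
    return accepted / total if total else 0.0
```

A falling acceptance rate is a signal that the prompt is producing noise and needs tightening before reviewers start ignoring the channel.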

9) Related use cases

Source links

To keep this page visual and bookmark-friendly, here are direct references from community sources:

- OpenClaw Showcase page

- Awesome OpenClaw Use Cases — Showcase-first (no dedicated Awesome entry)

- PR Review → Telegram source post

- OpenClaw in Action (YouTube)

FAQ

Can OpenClaw replace human review?

No. It should accelerate first-pass triage, not replace final ownership.

How fast can this go live?

Most teams can launch an MVP in one afternoon.

How do I reduce false positives?

Limit output length, scope by file type, and tune prompts with reviewer feedback.
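Scoping by file type can be as simple as filtering the list of changed paths before the diff reaches the model. A minimal Python sketch follows. The suffix allowlist and skipped directories are placeholder values and `files_to_review` is a hypothetical helper, so adjust both to your repo.

```python
from pathlib import PurePosixPath

# Placeholder values; tune these per repository.
REVIEWED_SUFFIXES = {".py", ".ts", ".go"}
SKIPPED_DIRS = {"vendor", "dist", "node_modules"}

def files_to_review(changed_paths):
    """Keep only source files worth a first-pass review."""
    keep = []
    for path in changed_paths:
        parsed = PurePosixPath(path)
        # Skip anything under a vendored or generated top-level directory.
        if parsed.parts and parsed.parts[0] in SKIPPED_DIRS:
            continue
        if parsed.suffix in REVIEWED_SUFFIXES:
            keep.append(path)
    return keep
```

For example, `files_to_review(["src/app.py", "vendor/lib.py", "docs/logo.png"])` keeps only `src/app.py`.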

What should I read after the first successful setup?

Use the command reference, Telegram setup guides, and adjacent build workflows so the review flow can grow without turning into an opaque black box.